Category: Tech

-

Create a dev copy of your WordPress site with WordOps

WordOps is a popular framework to set up and manage WordPress infrastructure, forked from EasyEngine in 2018 when EasyEngine v4 changed its architecture to use Docker containers. This is a quick guide to setting up a development copy of your live WordPress site, which can be useful if you’re working on a redesign or testing new…

-

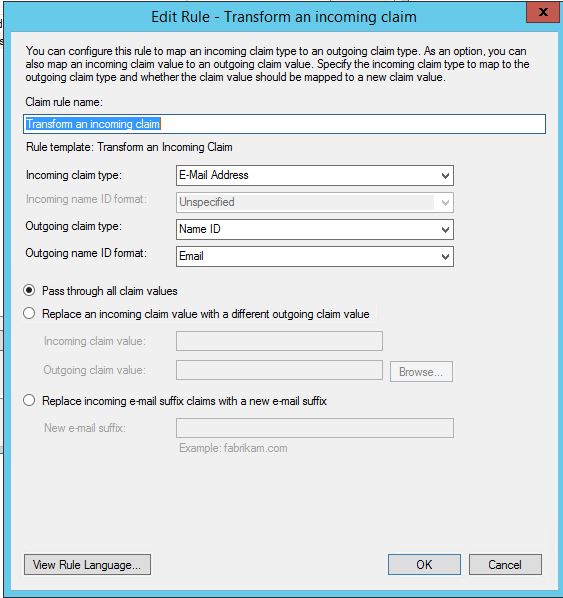

Configuring Atlassian Cloud Single Sign On for ADFS 3.0

Atlassian don’t officially support AD FS with Confluence Cloud – but it is working well now that I’ve sorted out the issues I was having passing the user’s email address through as the nameId claim. Hopefully these instructions can save you some trial and error. Enable SAML on Atlassian Cloud First off – enable SAML on your…

-

BLF lights not working after switching Asterisk to version 11

Found a strange issue after switching to Asterisk v11: the BLF buttons on our Panasonic SIP phones stopped working. Eventually I thought it was worth setting the ‘presence server address’ (which previously hadn’t been set, and is blank in Endpoint Manager) to the PBX, and the lights immediately started working. Much simpler solution…

-

802.1X authentication woes with NPS & EAP

Had a frustrating issue with some UniFi APs where clients were not able to authenticate to the Pro models, but were OK on the standard UniFis. Running a packet capture on the NPS server I could see many Access-Requests arriving at the server with an Access-Challenge immediately being sent back, but the AP would just keep…

-

KB: User’s print jobs showing as coming from another domain user

We’ve just had a strange problem where print jobs for one of our users were printing out and showing up on the printer as coming from a different username. Normally this probably wouldn’t matter too much, but they use PaperCut account selection – meaning the popups to select the printer account were displaying on the other…

-

Veeam announces free Time Machine equivalent for Windows

Veeam, known for being one of the leading providers of enterprise virtual backups, have just announced they will be releasing a free backup tool for desktop users, providing automatic backups to a NAS or other hard drive. Veeam Endpoint Backup FREE looks like it will be a great set-and-forget solution, allowing both simple file…

-

Next phase of UFB rollout for Greymouth

Chorus have updated their maps in the last week to show UFB is now available in more areas in Greymouth. It is still unclear which ISPs will be providing UFB in Greymouth – Snap is probably your best bet currently for home and basic business use; DTS can do business connections and apparently HD are…

-

Roomie Remote IP control for PJ-Link compatible projectors

We finally got our new Epson EB-4650 (unsure on exact model) projector connected to the network this week, allowing me to complete our Roomie Remote setup: controlling a projector, Marantz receiver, DVD and Freeview box. Although Roomie Remote had a one-size-fits-all Epson projector definition, I couldn’t get it working with IP control. Knowing the projector…

-

Dynamics CRM TextaHQ SMS Integration

Over the past six months I’ve been developing a Student Management System based on Dynamics CRM 2011 for one of the new Trades Academies. I’ll talk about why we chose Dynamics CRM in a later post, but this post is about the integration I built with the TextaHQ SMS Messaging service. TextaHQ was attractive for…

-

Security Tip: Automatic application updates with Ninite

This isn’t a free tip, but it works well for the networks I manage. One of the challenges for any Systems Administrator is keeping software up to date. I’m not so concerned about actually having the latest version of software so much as making sure that if there are any security updates these are taken care of…